What Doctors Do When Your Case Is Too Complicated

Large language models are entering medicine quickly. But when cases get difficult, many physicians still rely on something older and often more powerful: each other.

Every doctor eventually encounters a case that stops them in their tracks.

Maybe it’s a rare autoimmune disease.

Maybe an unusual infection.

Maybe a complication that doesn’t show up in textbooks.

Medicine is too large for any one physician to master completely.

That’s where Figure1 comes in.

Most patients have never heard of it because the platform is designed for healthcare professionals. Think of it as a global clinical forum where physicians post difficult cases and get input from colleagues across specialties and countries.

Unlike LLM-based tools such as OpenEvidence, Figure1 is not an AI tool. It’s a community of human physicians sharing real-world experience to help solve difficult clinical problems.

And sometimes that collective knowledge can make all the difference for a patient.

Solving Hard Cases

Imagine you have a really complicated rheumatology case, such as retroperitoneal fibrosis.

There isn’t much literature on it, and the world’s leading experts on the topic are busy being leading experts, so you have to manage it yourself.

While many believe that LLMs have the answers, personally I’ve mostly gotten bad or biased advice from them when managing my patients.

But perhaps that’s a user error.

Fortunately, I can take a case like that, post it on Figure1, and get input from doctors all over the world, across all different specialties.

Time Needed to Post

The average U.S. doctor has less than 15 minutes with a patient.

The rest of the time goes to documentation, prior authorizations, and care coordination.

To make a post you have to collect all the right information, get the patient’s consent, formulate the right question, add the right images, post your case, and engage with replies.

The doctors who are doing this are not your average doctor.

But sometimes you need someone who is not average.

Patient Consent & Protected Health Information

Most electronic health record (EHR) systems are constantly collecting patient data and sharing it with third-party companies to figure out how to squeeze more money out of each patient.

This isn’t a fringe opinion.

Electronic health records were supposed to improve care coordination. In reality, many have become billing and documentation machines.

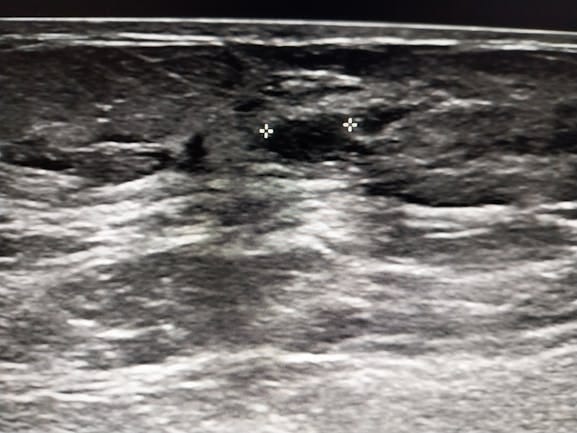

So, what about that X-ray of the patient’s foot? Well, it belongs to the patient, no doubt. Your data should be your data. But the problem is that it’s not, right? Large medical groups and insurance companies are using it without your express permission.

When I take your foot X-ray and post it to get clinical feedback, that’s considered disclosing protected health information. Even if the intention is good, I need direct patient consent.

This makes the work much harder. Now I am exposed to risk. I have to find my patient and get their permission, and I also have to get permission from my medical group to use the image, because it was taken on their premises.

It’s messy and it’s hurting patients. And it doesn’t need to be.

Learning From International Doctors

When was the last time you read about treating leishmaniasis or MDR malaria? Or how to manage a cancer patient when you don’t have access to an MRI?

The cumulative knowledge of 10 doctors is a very powerful force when it comes to patient care.

Sometimes you have to turn to other countries because your patient can’t afford a CT scan or a certain medication.

How do they do it?

How do they choose when they don’t have all the necessary information?

Figure1 vs. LLMs

LLMs = pattern recognition from literature.

Figure1 = real physicians discussing real cases.

Figure1 has been around a lot longer than LLMs. Yet LLMs have penetrated healthcare so easily that hardly anyone has batted an eye.

Patients are being recorded, their data is being scraped by AI, and AI is even making diagnoses in the EHR.

The reason LLMs have won out over case forums like Figure1 is that LLMs are considered unbiased and aren’t human.

An AI will digest information from a patient’s chart and it won’t go disclosing that data to another person.

But it will take the information and use it as training data (for free) and also aggregate that data to help make better financial decisions when it comes to patient care.

I guess I would want things to be more equitable, more fair. Let LLMs rise, but don’t silence far better existing tools under the pretense that we’re protecting sensitive patient information.

How Does Your Doctor Expand Their Reach?

The next time you feel chummy with your MD, ask them what they do when they have a tough case. Perhaps they nudge a colleague or present the case at grand rounds.

Working in a silo will prevent me from becoming the best doctor I can be. I won’t grow and I’ll only be semi-effective for my patients.

Of course, we doctors first need to be vulnerable enough with our patients to answer such a question honestly. But hopefully you have that kind of relationship with your doctor. If not, it’s worth starting to build one.

Disclaimer:

Dr. Mohammad Ashori is a U.S.-trained family medicine physician turned health coach. The content shared here is for education and general guidance only. It is not personal medical advice, diagnosis, or treatment, and it does not create a doctor–patient relationship. Humans are complicated and context matters. Always talk with your own healthcare team before making medical decisions, changing medications, or ignoring symptoms. This information is to help you add more depth to those conversations.
